
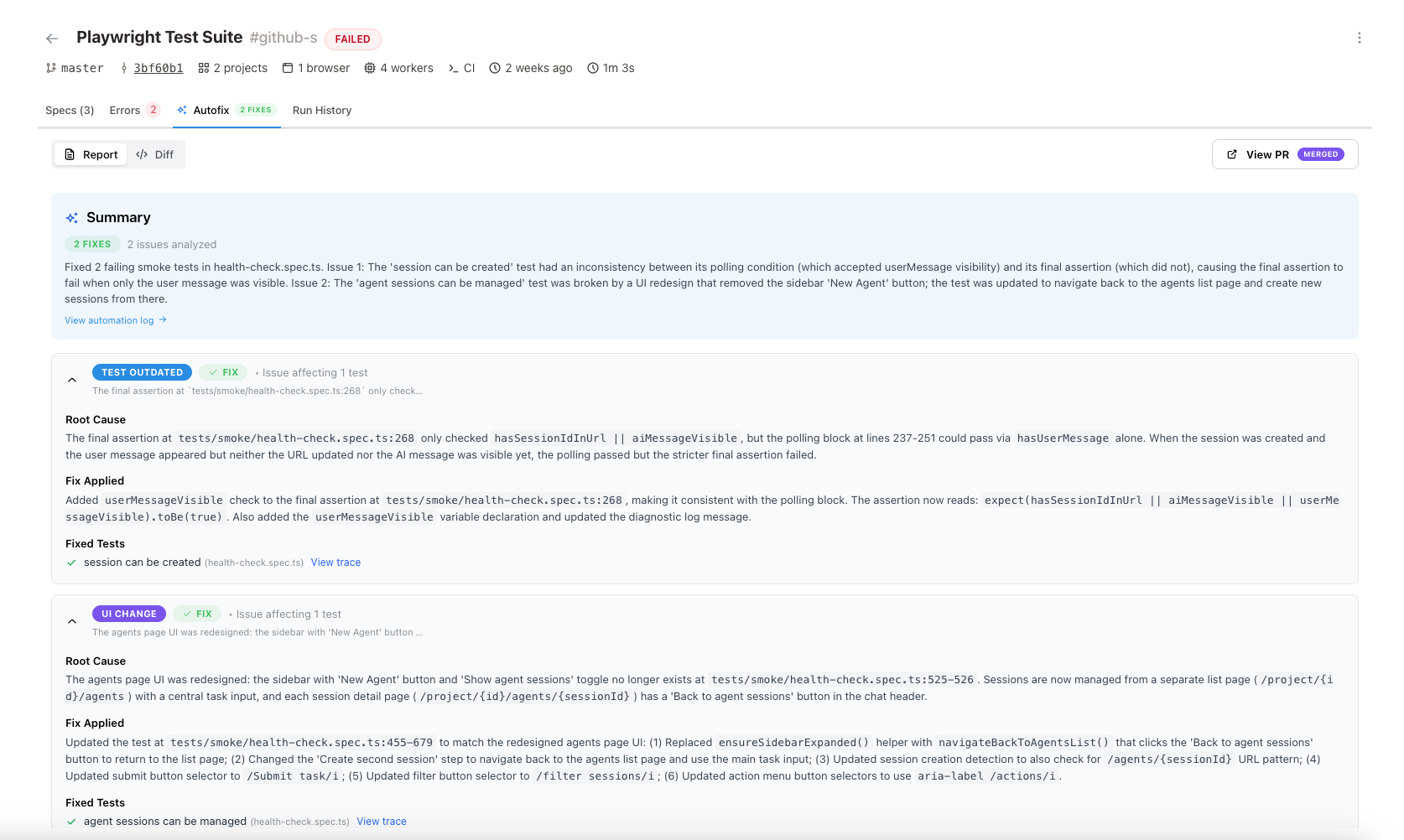

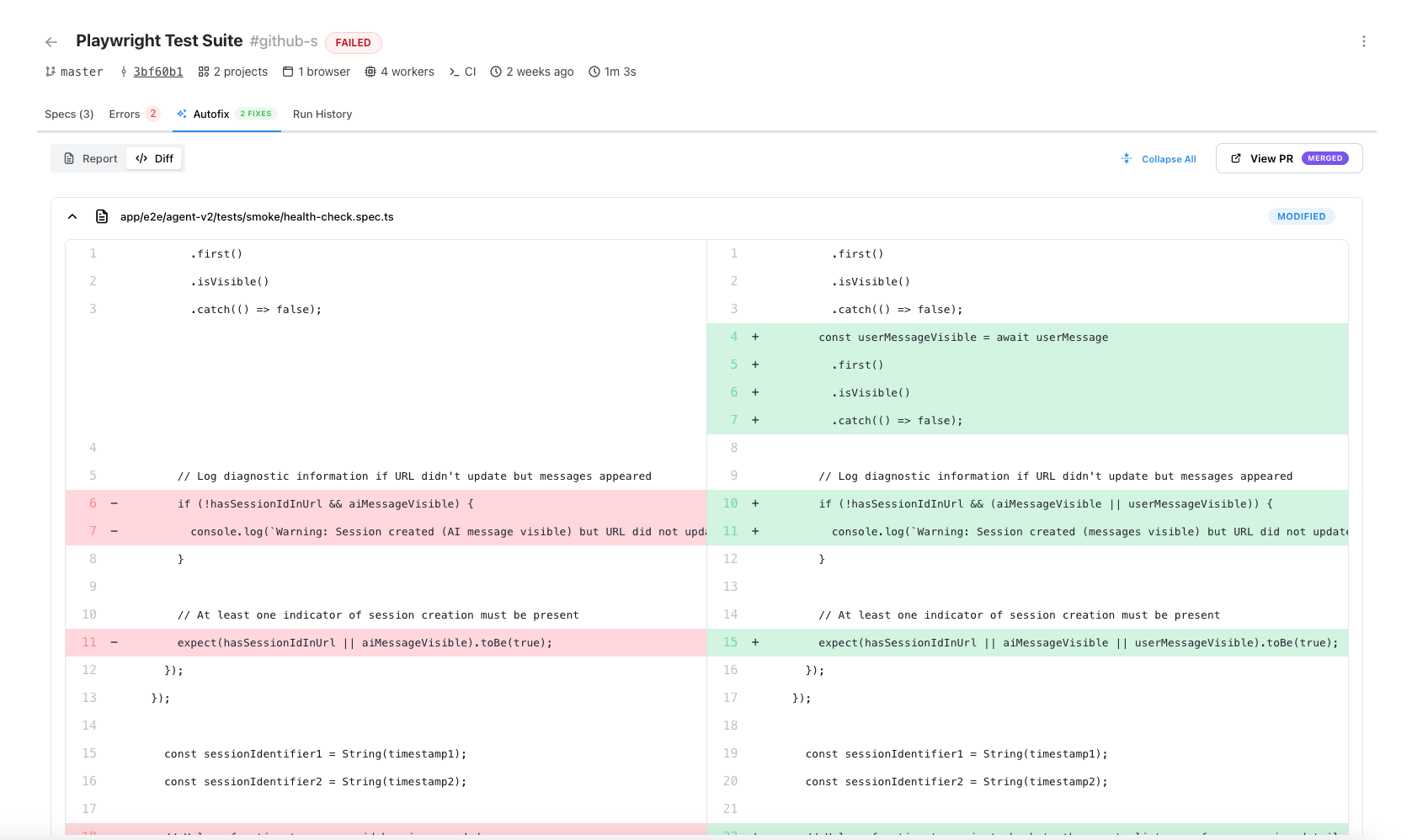

- Accurate triage — Compares past and current test run replays side-by-side to pinpoint exactly what changed. Digs into network requests, console logs, and DOM state to find the real root cause — especially effective at eliminating flaky tests.

- Scales to real workloads — Whether you have 5 failures or 500, Autofix spins up on-demand cloud browsers to validate every fix in parallel. Built for production, not demos.

- Every fix comes with proof — No vibe-test fixes. Every code change is re-run in a real browser, producing a full replay report with screenshots, traces, and DOM snapshots before anything reaches your codebase.

Quick Setup

- Stably CLI

- Stably Cloud

Run stably fix after any test execution.

Ways to Use Autofix

Automatic (at trigger time)

Enable autofix: true as a project default in stably.yaml or per schedule, or pass it when triggering a run via the API or dashboard UI. Autofix then runs automatically on Stably Cloud after test failures, with no manual step needed.

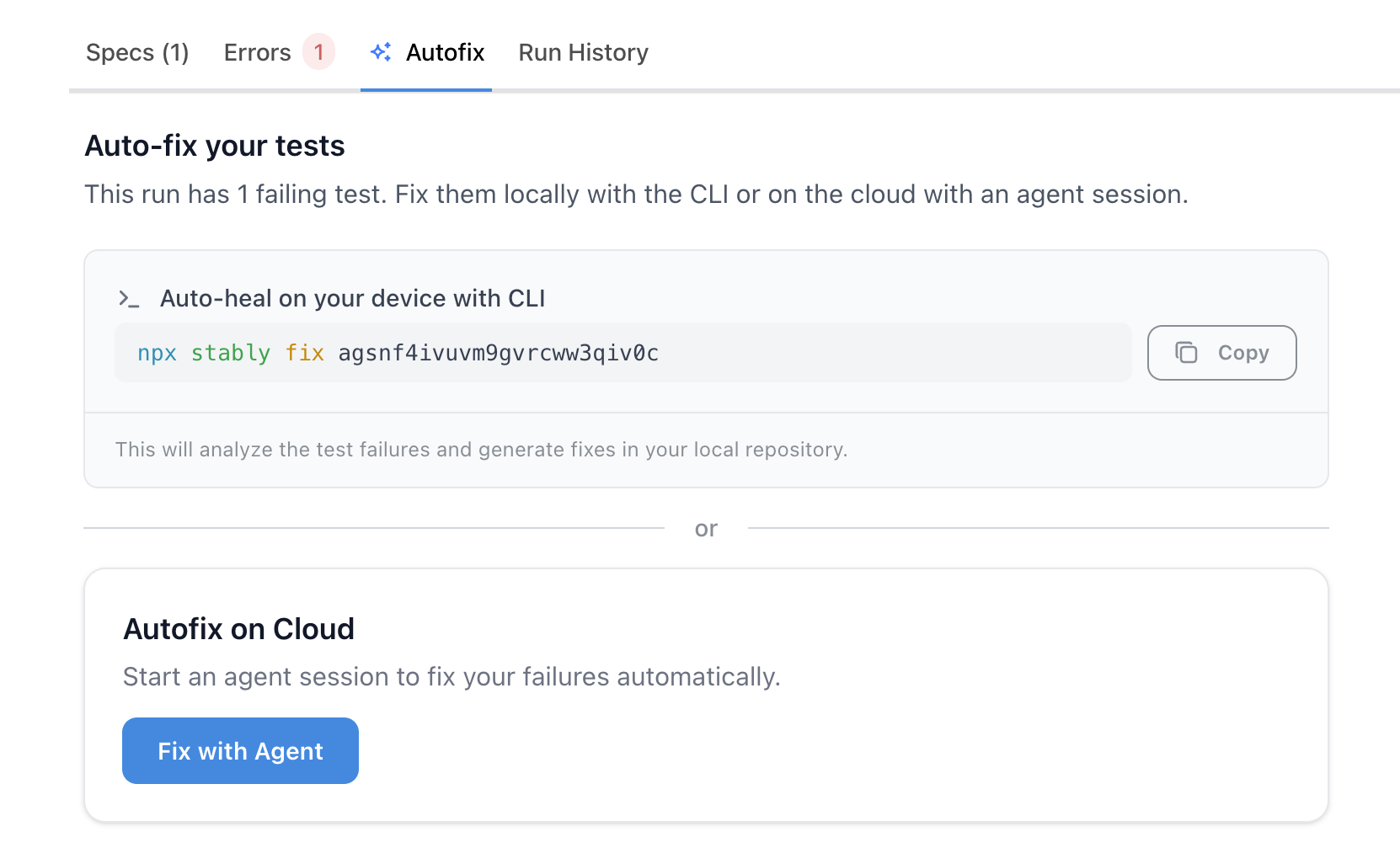

On-demand (after any failed run)

After any run completes with failures, the Autofix tab on the run details page presents two options. Choose whichever fits your workflow:

- Autofix on Cloud: Click “Fix with Agent” to start a cloud agent session. The agent diagnoses failures and generates fixes on Stably infrastructure; at the end you can create a PR (if your repo is connected).

- Auto-heal on your device with CLI: Copy the ready-to-run npx stably fix <runId> command and run it locally. Fixes are applied to your working tree. Review with git diff and commit when ready.
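The local path can be sketched as a small shell helper. This is a minimal sketch, assuming the npx stably fix <runId> command copied from the Autofix tab; the function name fix_run_locally is hypothetical, and the run ID is whatever the dashboard shows for your failed run.

```shell
# Hypothetical helper: apply Autofix changes for one failed run, then show them.
# The run ID comes from the Autofix tab; it is passed in, never guessed.
fix_run_locally() {
  run_id="$1"
  if [ -z "$run_id" ]; then
    echo "usage: fix_run_locally <runId>" >&2
    return 2
  fi
  # Fixes are applied to the working tree.
  npx stably fix "$run_id" || return $?
  # Review the changes before committing anything.
  git diff
}
```

Nothing is committed by this helper; committing stays a separate, deliberate step.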

autofix was enabled at trigger time.

In CI

Add a stably fix step after stably test in your pipeline. Results are always uploaded to the dashboard, where you can review diffs and create a PR (if your repo is connected). Optionally, add git steps to commit and push directly from CI. See the CI Integration section for examples.
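The pipeline step described above amounts to running the fix command only when the test command fails. The following is a minimal sketch of the stably test || stably fix pattern from this page; the function name test_then_fix is hypothetical, and invoking the CLI through npx is an assumption based on the npx stably fix <runId> command shown on the Autofix tab.

```shell
# Hypothetical CI helper: run the tests, and run the fix step only on failure.
test_then_fix() {
  if npx stably test; then
    echo "tests passed; nothing to fix"
    return 0
  fi
  # Tests failed: hand over to the fix step.
  npx stably fix
}
```

The function returns the exit status of the fix step when tests fail, so your pipeline decides whether a successful fix should still mark the job as failed.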

Comparison

| | Automatic (at trigger) | On-demand: Cloud | On-demand: CLI | CLI in CI |
|---|---|---|---|---|
| Trigger | autofix: true on schedule/API/UI | Click “Fix with Agent” on Autofix tab | Copy stably fix <runId> from Autofix tab | stably test \|\| stably fix |
| Runs on | Stably cloud | Stably cloud | Your machine | Your CI runner |
| PR creation | Automatic (repo connected) | From dashboard (repo connected) | You commit manually | From dashboard (repo connected), or git steps in CI |
| Human trigger needed | No | Yes (click button) | Yes (run command) | No (wired into pipeline) |

What Autofix Can Fix

Tests need updating

Actual bug in your app

pricing.ts):

Flaky / unstable tests

How Autofix handles failing locators

When a locator fails, Autofix first tries to find the correct updated locator by navigating through your application in a live browser. It inspects the current page state, identifies what changed, and updates the selector in your test to match the new UI. If your repo is connected, Autofix can also update the data-testid in your application code alongside the test selector. You can customize this behavior through your STABLY.md file (e.g., preferred selector strategies or naming conventions).

Example: Updating a broken locator

page.getLocatorsByAI()) or an agent.act() call that uses natural language instead of brittle selectors.

Example: Replacing with an AI locator

How It Works

Triage

Fix

Validate in a real browser

Deliver fixes

- Cloud runs with connected repo: A PR/MR is created automatically. Review and merge when ready — Stably does not push to your repo until you merge.

- Cloud runs without connected repo: View diagnosis and code diffs in the dashboard. Apply changes to your codebase manually, or connect your repo to enable automatic PRs.

- CLI runs: Fixes are applied to local files. Results are always uploaded to the dashboard — review diffs and create a PR from there (repo connected). In CI, you can also add git commit/push/PR steps to your workflow if you prefer.

FAQ

Does Stably create a PR automatically?

- Cloud Runner with connected repo: Yes, automatically. Connecting your repo is not required for running tests or CLI usage — it’s only needed when you want Stably to open PRs/MRs from cloud or dashboard runs.

- Cloud Runner without connected repo: No — view diffs in the dashboard, apply manually.

- CLI: Fixes are applied to your local files and results are uploaded to the dashboard. If your repo is connected, you can create a PR from the dashboard. Otherwise, commit manually or add git steps in CI.

Do I need to trigger autofix manually?

- autofix: true (on schedule, API, or UI trigger) → fully automatic after failures, no separate trigger needed.

- Autofix tab (post-run) → manual trigger. After any failed run, the Autofix tab offers both “Fix with Agent” (cloud) and a ready-to-copy stably fix CLI command. Pick whichever you prefer.

- CLI in CI → automatic if wired into your pipeline (stably test || stably fix).

Where should I run "stably fix"?

- Inside a git repository — required, since fix tracks changes via git.

- Usually from the same directory where you run stably test. For non-standard layouts (monorepos, etc.), use --cwd or --config to point at the right project.

- With STABLY_API_KEY and STABLY_PROJECT_ID env vars set (or use stably login).
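Since the fix step requires a git repository, a pipeline or local script can check that precondition first. The following is a minimal sketch; the function name in_git_repo is hypothetical and not part of the Stably CLI, and it relies only on the standard git rev-parse --is-inside-work-tree query.

```shell
# Hypothetical preflight: succeed only when the directory is inside a git work tree.
# Defaults to the current directory when no argument is given.
in_git_repo() {
  dir="${1:-.}"
  # git prints "true" inside a work tree; outside a repository it fails,
  # and inside the .git directory itself it prints "false".
  [ "$(git -C "$dir" rev-parse --is-inside-work-tree 2>/dev/null)" = "true" ]
}
```

A caller could run in_git_repo . before invoking stably fix and stop early with a clear message if the check fails.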

Can I run stably fix in each CI matrix/shard job?

stably fix groups failures by root cause across all tests, so running it per shard misses cross-test patterns and may cause conflicts. See the CI Integration section in the CLI guide for the correct matrix/sharding pattern.

Can the CLI access tests created on the web portal?

Set STABLY_PROJECT_ID to your web portal project ID; the CLI then has access to all of that project's tests and run history.

What env vars does the fix step need?

- Always: STABLY_API_KEY + STABLY_PROJECT_ID.

- Plus: the same env vars your tests need (e.g., BASE_URL, test credentials), because fix re-runs tests to validate fixes.

- Use --env <name> to load a named environment from Stably, avoiding secret duplication.
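A fix step can fail late if one of these variables is missing, so it may help to check them up front. The following is a minimal sketch; the function name require_env is hypothetical and not part of the Stably CLI, and BASE_URL in the usage line is only the example variable named above.

```shell
# Hypothetical check: report every listed variable that is unset or empty.
# Returns 0 when all are present, 1 otherwise.
require_env() {
  missing=0
  for name in "$@"; do
    # Indirect lookup of the variable whose name is stored in $name.
    eval "value=\${$name:-}"
    if [ -z "$value" ]; then
      echo "missing env var: $name" >&2
      missing=1
    fi
  done
  return "$missing"
}
```

For example, a CI script could call require_env STABLY_API_KEY STABLY_PROJECT_ID BASE_URL before running the fix step.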

Can I run "stably fix" on any failed run?

Run stably fix <runId> from your repo directory. This works for local runs, CI runs, and cloud runs.

Next Steps

Fix Tests (stably fix)

stably fix — run ID detection, CI integration, and monitoring